WYSK: 07/15/22

This Week: OGT: James Webb Telescope; 1. Uber Files; 2. Sentiment Analysis; 3. Ring Video; 4. Prison Labor

What you should know from the week of 07/15/22:

- One Good Thing: The James Webb Telescope!

- Uber Files: Leaked files from Uber prove a policy of criminality;

- Sentiment Analysis: Algorithms and AI depend on well-labeled data, and Google's sentiment analysis dataset showcases the often-flimsy foundation of labeling;

- Ring Video: Predictably, Amazon's Ring doorbells are being used for warrantless surveillance;

- Prison Labor: America is still trying to kick the habit, as forced labor continues to underpin American society.

One Good Thing:

NASA revealed images from the James Webb Space Telescope this week. Wow! Some really glorious images.

Uber Files:

Harry Davies and the team at the Guardian, in collaboration with the International Consortium of Investigative Journalists (ICIJ), are publishing reporting derived from internal Uber documents furnished by "Mark MacGann, Uber’s former chief lobbyist for Europe, the Middle East and Africa":

The cache of files, which span 2013 to 2017, includes more than 83,000 emails, iMessages and WhatsApp messages, including often frank and unvarnished communications between Kalanick and his top team of executives.

Uber's tendency to violate the law whenever it believed doing so would be profitable was well known, but these files show that its lawless behavior resulted not merely from a culture of lawlessness but from a policy of criminality:

Commenting on the tactics the company was prepared to deploy to “avoid enforcement”, another executive wrote: “We have officially become pirates.”

Nairi Hourdajian, Uber’s head of global communications, put it even more bluntly in a message to a colleague in 2014, amid efforts to shut the company down in Thailand and India: “Sometimes we have problems because, well, we’re just [f!@#ing] illegal.” Contacted by the Guardian, Hourdajian declined to comment.

Travis Kalanick, Uber's CEO at the time, has largely responded by arguing that whatever tactics Uber used were legal, and that Uber had to bend and skirt the rules because it was the first to innovate in this way.

Scott Galloway's recent blog post on enablers was directed primarily at Elon Musk, but it applies well here too:

The “idolatry of innovators,” leads to the misguided notion that people (usually men) of great achievement (usually in tech) should not be criticized, are not bound by a code of ethics, and are above the law.

...

Since Steve Jobs, the gestalt in tech is that a talented, nice CEO … is talented. A talented CEO who is unreasonable is a genius. The powerful skirt guardrails, and remove them altogether with enablers.

Sentiment Analysis:

Edwin Chen of SurgeHQ (a company that sells services providing "highly trained data labelers and data labeling tools in 35+ languages") reports on the flawed methodology Google used to label an emotion dataset.

Supervised machine learning depends on data labeling: humans (usually) comb through a dataset and label each item. The model is then trained on that labeled dataset. Because the correct labels are already known, some of the labeled data can be held back to measure how accurately the model performs.
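To make that last point concrete, here is a minimal Python sketch of scoring a model against labeled data. The emotions, the comments they stand for, and the `accuracy` helper are all hypothetical toy examples, not Google's or SurgeHQ's actual data or code. It also shows why bad labels matter: a model that is right every time still looks wrong whenever the labels it is scored against are wrong.

```python
def accuracy(predictions, labels):
    """Fraction of predictions that match the reference labels."""
    if len(predictions) != len(labels):
        raise ValueError("predictions and labels must have the same length")
    matches = sum(p == l for p, l in zip(predictions, labels))
    return matches / len(labels)


# Hypothetical toy data: the true emotion behind five comments.
true_emotions = ["joy", "anger", "sadness", "joy", "fear"]
# The same five comments as labeled in a flawed dataset (two mistakes).
dataset_labels = ["joy", "joy", "sadness", "anger", "fear"]
# A hypothetical model that happens to get every comment right.
model_predictions = ["joy", "anger", "sadness", "joy", "fear"]

print(accuracy(model_predictions, true_emotions))   # 1.0
print(accuracy(model_predictions, dataset_labels))  # 0.6
```

Against the true emotions the model scores 1.0, but against the flawed labels the very same predictions score 0.6, so the dataset penalizes the model for the labelers' mistakes. Training on mislabeled data compounds the problem by teaching the model those mistakes in the first place.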

Google attempted to label nearly 60,000 comments from the internet super-forum Reddit, but the labels turned out to be poor:

The problem? A whopping 30% of the dataset is severely mislabeled! (We tried training a model on the dataset ourselves, but noticed deep quality issues. So we took 1000 random comments, asked Surgers whether the original emotion was reasonably accurate, and found strong errors in 308 of them.) How are you supposed to train and evaluate machine learning models when your data is so wrong?
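SurgeHQ's count of 308 errors in 1,000 randomly sampled comments is an estimate of the error rate across the whole dataset, so it carries some sampling uncertainty. Below is a minimal Python sketch of putting a range on it, assuming the 1,000 comments were a simple random sample and each audit was a yes/no judgment. The Wilson score interval used here is a standard choice for proportions; SurgeHQ does not say it computed one.

```python
import math


def wilson_interval(errors, sample_size, z=1.96):
    """Wilson score interval for an error rate (z=1.96 gives ~95% coverage)."""
    p = errors / sample_size
    denom = 1 + z**2 / sample_size
    center = (p + z**2 / (2 * sample_size)) / denom
    margin = (
        z
        * math.sqrt(p * (1 - p) / sample_size + z**2 / (4 * sample_size**2))
        / denom
    )
    return center - margin, center + margin


# Figures from SurgeHQ's audit: 308 strong errors in 1,000 sampled comments.
errors, sample = 308, 1000
low, high = wilson_interval(errors, sample)
print(f"point estimate: {errors / sample:.1%}")  # point estimate: 30.8%
print(f"95% interval: {low:.1%} to {high:.1%}")
```

With 1,000 comments sampled, the interval runs from roughly 28% to 34%, so the "30%" figure is not a fluke of a small sample. The interval only accounts for sampling noise; it says nothing about whether the auditors' own judgments were correct.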

In this case, Google's methodology presented comments to labelers with no context and used native English speakers from India. In the article, SurgeHQ convincingly walks through the significant limitations of those two design choices. SurgeHQ's entire business is built around data labeling, and it sells services to Google and other companies, so it isn't a purely disinterested third party here. Still, its complaints about Google's methodology are valid.

Two big takeaways from this article:

Society gifts too much trust to companies like Google merely because they are large.

Google made poor design decisions here, presumably to save time and money, and the result was a badly mislabeled dataset.

Society gifts too much trust to algorithm-based decision making because it is so opaque.

I've written on this before and in more detail with regard to Facebook, but the inscrutable nature of machine learning, combined with fantastic claims about the utopian improvements that can be expected from such innovation, causes people to forget that actual human beings are ultimately behind the decisions that are then amplified in AI/ML.

Ring Video:

POLITICO's Alfred Ng provides the excruciatingly predictable update that Amazon has used its Ring systems to move beyond warrantless surveillance (I wrote more on Amazon's courtship of local police departments last November) into surveillance without even the owners' consent.

Amazon is using Ring footage without the consent of the doorbell owners:

Amazon handed Ring video doorbell footage to police without owners’ permission at least 11 times so far this year...

Ring only provided this information after being hassled by a US Senator:

The revelation came in a letter Amazon sent to Sen. Ed Markey (D-Mass.) on July 1 after the lawmaker questioned the video doorbell’s surveillance practices in June.

The surveillance issues arising from Ring and similar systems are growing rapidly. The Register reported this week that:

San Francisco lawmakers are mulling a proposed law that would allow police to use private security cameras – think: those in residential doorbells, medical clinics, and retail shops – in real time for surveillance purposes.

This trend both reflects and accelerates a surrender of rights, and it is antithetical to the American tradition of distrusting and limiting the executive power of the state.

Prison Labor:

Speaking of things that are antithetical to American values, Jimmy Jenkins from AZ Central reports on Arizona's stated dependence on prison labor:

In Arizona, all people in state prisons are forced to work 40 hours a week with exceptions for prisoners with health care conditions and other conflicting programming schedules. Some prisoners earn just 10 cents an hour for their work.

...

“Yes. The department does more than just incarcerate folks,” [Arizona Department of Corrections Director David] Shinn replied. “There are services that this department provides to city, county, local jurisdictions, that simply can't be quantified at a rate that most jurisdictions could ever afford. If you were to remove these folks from that equation, things would collapse in many of your counties, for your constituents.”

Well, it is antithetical to our ideals, at least, though it is hideously in line with the worst parts of American history. America did outlaw slavery, thank God, but the Thirteenth Amendment still permits it "as a punishment for crime," and we haven't fully excised it from the fabric of our culture and society.

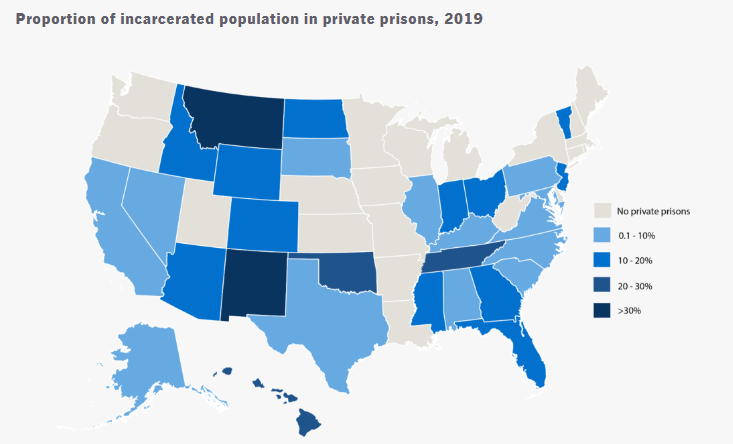

The whole article digs into the for-profit prison industry. The map above comes from the Sentencing Project and shows how heavily different US states rely on private prisons.

Private prisons make us a less just society. The good news is that states are growing increasingly opposed to the use of private prisons, and the proportion of people imprisoned in private prisons is decreasing! The bad news is that so many states still use them, and that prisons are still permitted to exploit prisoners for profit.

Happily, there is plenty you can do: call your members of Congress and city council members to make this a priority, read blogs like More Than Our Crimes to humanize prisoners and better understand who they are, donate to prison libraries, and volunteer at prisons through federal and local programs.

Interest piqued? Disagree? Reach out to me at TwelveTablesBlog [at] protonmail.com with your thoughts.

Photo by Tamara Gak on Unsplash