WYSK: 12/31/21

This Week: 1. Alexa Challenge; 2. Housing Realities; 3. AI Companions; 4. Facebook Quotes

What you should know from the week of 12/31/21:

- Alexa Challenge: Amazon recommends "Penny Challenge" to a child, showcasing the harm caused by the irresponsibility of the regurgitation business model;

- Housing Realities: NLIHC research shows housing is unaffordable across America;

- AI Companions: Who is served by visions for an AI-empowered future;

- Facebook Quotes: The top 10 quotes from Facebook/Meta employees in 2021.

Alexa Challenge:

Eric Bangeman from Ars Technica reported on an interesting story this week in which Amazon's voice assistant gave a dangerous suggestion to a child:

A 10-year-old girl and her mother got a lesson about the utility of voice assistants after Amazon's Alexa suggested the girl try the TikTok plug challenge.

...

For the (blessedly) uninitiated, the plug challenge consists of partially plugging a phone charger into an electrical outlet and then dropping a penny onto the exposed prongs. Results can run the gamut from a small spark to a full-on electrical fire.

Other news sites like the Verge have provided additional reporting on some of the technical bits behind the recommendation, but I'll offer up my quick takeaway from the event:

Many tech companies today rely on a regurgitation business model of creating platforms to solicit content or scraping the internet for content, and then in turn offering that up to users. While this business model has provided such companies shields from liability on the grounds that the companies did not produce the content themselves, it is not good for society.

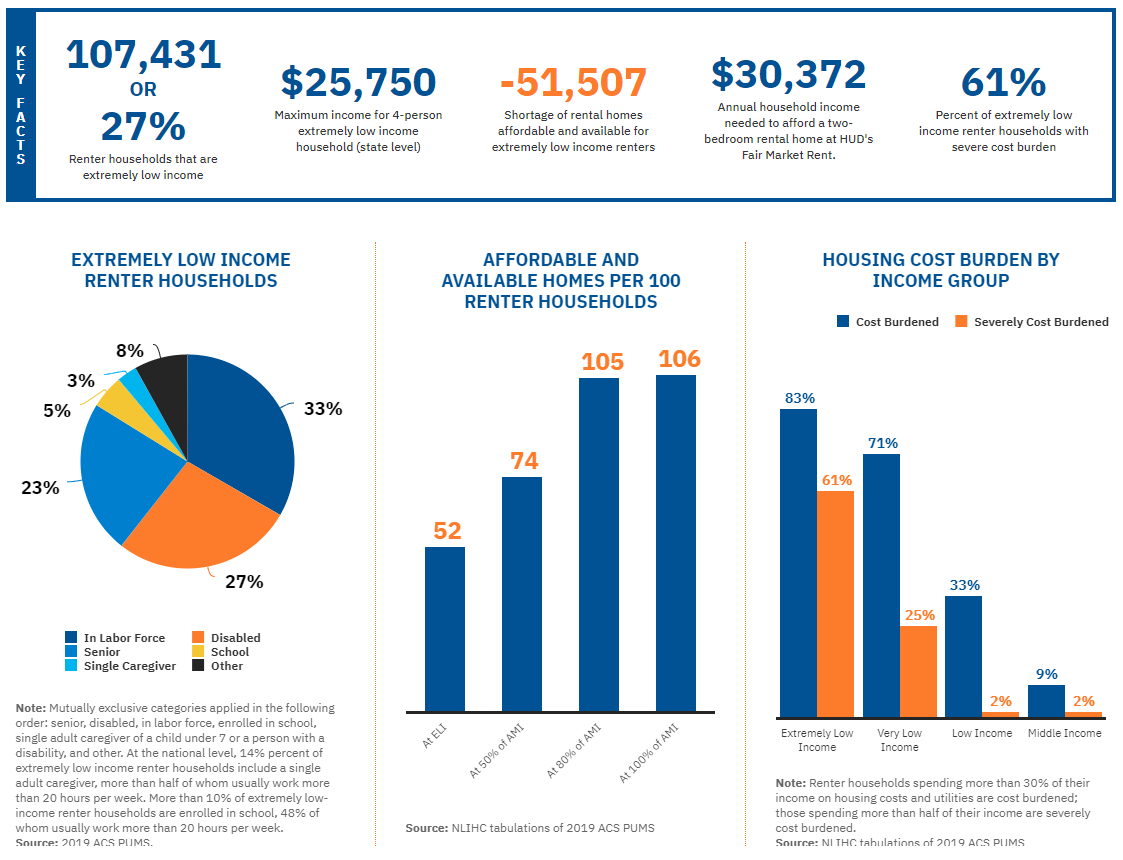

Housing Realities:

The US is facing a housing crisis, but how serious is that crisis really? Is it just wealthy millennials from California moving during COVID and raising housing prices? Can't people just budget better in order to afford housing?

The National Low Income Housing Coalition has an excellent tool--called "Out of Reach"--for analyzing housing costs in the US. The data was staggering to me. I strongly recommend you spend a few minutes clicking through the tool.

"Out of Reach" relies on data in their full report. Page 11 of the report walks through critical definitions used in the tool and in their reporting; I've included summaries of some definitions inline at the end of this section of WYSK.

You can click on a state, or even enter in a locality or zip code in order to look at housing cost details for a given area. For example, Arkansas is listed as the most affordable state in the US, and you can see details for Arkansas here; by clicking on the "Connect to Network" option on that page you can expand further details like what is shown here:

One key takeaway is that broadly across the states the vast majority of Extremely Low Income Renter Households are in the labor force, are seniors, or are disabled. That is, these are people who are working or are unable to work.

It is not the case that America's housing issues are driven by laziness or poor financial management skills.

NLIHC further notes that "In only 218 counties out of more than 3,000 nationwide can a full-time worker earning the minimum wage afford a one-bedroom rental home at the Fair Market Rent."

Definitions from the Report (page 11):

"Affordability in this report is consistent with the federal standard that no more than 30% of a household’s gross income should be spent on rent and utilities. Households paying over 30% of their income are considered cost-burdened. Households paying over 50% of their income are considered severely cost-burdened.

Fair Market Rent (FMR) is typically the 40th percentile of gross rents for standard rental units. FMRs are determined by HUD on an annual basis, and reflect the cost of shelter and utilities. FMRs are used to determine payment standards for the Housing Choice Voucher program and Section 8 contracts.

Housing Wage is the estimated fulltime hourly wage workers must earn to afford a decent rental home at HUD’s Fair Market Rent while spending no more than 30% of their income on housing costs.

Extremely Low Income (ELI) refers to earning less than the poverty level or 30% of Area Median Income (AMI).

Full-time work is defined as 2,080 hours per year (40 hours each week for 52 weeks). The average employee works roughly 35 hours per week, according to the Bureau of Labor Statistics.

Renter wage is the estimated mean hourly wage among renters, based on 2019 Bureau of Labor Statistics wage data, adjusted by the ratio of renter household income to the overall median household income reported in the ACS and projected to 2021."
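To make the definitions above concrete, here is a minimal sketch of the arithmetic they imply. The function names are my own, and the $1,000 monthly Fair Market Rent is a made-up figure for illustration only; it is not taken from the report. The sketch follows only the quoted definitions (the 30% and 50% thresholds and the 2,080-hour work year) and may not match every detail of NLIHC's actual methodology.

```python
# Constants taken from the report definitions quoted above.
AFFORDABLE_SHARE = 0.30          # no more than 30% of gross income on rent and utilities
SEVERE_SHARE = 0.50              # over 50% is "severely cost-burdened"
FULL_TIME_HOURS_PER_YEAR = 2080  # 40 hours each week for 52 weeks


def housing_wage(monthly_fmr: float) -> float:
    """Hourly full-time wage at which rent at the FMR equals 30% of gross income."""
    annual_income_needed = monthly_fmr * 12 / AFFORDABLE_SHARE
    return annual_income_needed / FULL_TIME_HOURS_PER_YEAR


def cost_burden(monthly_housing_cost: float, monthly_gross_income: float) -> str:
    """Classify a household using the 30% and 50% thresholds."""
    share = monthly_housing_cost / monthly_gross_income
    if share > SEVERE_SHARE:
        return "severely cost-burdened"
    if share > AFFORDABLE_SHARE:
        return "cost-burdened"
    return "not cost-burdened"


if __name__ == "__main__":
    hypothetical_fmr = 1000.0  # illustrative only, not a figure from the report
    print(f"Housing Wage: ${housing_wage(hypothetical_fmr):.2f}/hour")  # $19.23/hour
    print(cost_burden(900, 2000))  # 45% of income -> cost-burdened
```

In other words, under these assumptions a $1,000 rent requires $40,000 a year in gross income, or about $19.23 an hour for a full-time worker.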

AI Companions:

Yana Zalesskaya in Fortune this week wrote a stunning article on 'AI companions'.

Her thesis paragraph notes that A.I. can enhance human lives, and she does (perhaps obliquely) note that A.I. will execute the goals and biases of its designers and providers:

Valid questions aside, the truth is that A.I. has the power to enhance, not diminish, human potential. It can transform our experiences for the better, optimize our capabilities, and help us instill habits to become healthier and potentially even happier. It can be our friend, not our worst enemy—but only when it is designed and delivered thoughtfully.

So far so good, but then the article takes a sudden shift. I know block quotes can be a pain, but please do read the next two paragraphs (emphasis mine):

Imagine a future where people elect to have an A.I. companion whose relationship with you begins at birth, reading everything from your grades at school to analyzing your emotions after social interactions. Connecting your diary, your medical data, your smart home, and your social media platforms, the companion can know you as well as you know yourself. It can even become a skilled coach helping you to overcome your negative thinking patterns or bad habits. It can provide guidance and gently nudge you towards what you want to accomplish, encouraging you to overcome what’s holding you back.

Drawing on data gathered across your lifetime, a predictive algorithm could activate when you reach a crossroads. Your life trajectory, if you choose to study politics over economics, or start a career in engineering over coding, could be mapped before your eyes. By illustrating your potential futures, these emerging technologies could empower you to make the most informed decisions and help you be the best version of yourself.

There are three critical issues with these paragraphs:

- The proposed consent is impossible, and that "election" could never be conducted by the subject.

- An A.I. must draw from previous data to make predictions. Since there has never been a previous "you," an A.I. will never be able to help you be a "better you."

- A.I.'s recommendations will be constrained and restrained by its designers; an A.I. will be serving its designers, and the benefits to society will be limited by the intentions of the designers, their wisdom and technical ability, and the capabilities of technology.

The proposed consent is impossible:

Let's reread that first sentence from the block quote above: "...people elect to have an A.I. companion whose relationship with you begins at birth." While consent is implied on a first reading, it becomes obvious upon reflection that no one can give informed consent at or before their own birth.

A.I. cannot predict You:

An A.I. will be unable to help you to be the best version of yourself. Since there has never been a previous "you," an A.I. will only be able to extrapolate based on other people.

At best an A.I. will be able to help you to be an idealized aggregation of people the algorithm deems similar to you. At worst an A.I. will merely direct you into variations of intentionally predefined channels determined by the designers of the A.I.

A.I. designers will constrain and restrain possible courses of action:

In any event, some designer will program the A.I. with prioritized and/or forbidden aspirations: Hitlerian or Stalinesque goals must be locked out of the A.I., while other goals may be prioritized. But ultimately the possible recommendations of the A.I. will be constrained and restrained by law and virtue, and someone will be the person to identify and program those constraints and restraints in.

How would a John Lewis, a Martin Luther King Jr, a George Washington, a Gandhi, a William Wilberforce, a Cincinnatus—or countless others who broke laws, defied social norms at great personal cost, engaged in violent revolutions, or sacrificed personal ambition for the public good—ever form?

Facebook Quotes:

As we close out the year, we wanted to share our Top 10 Facebook quotes we've seen in 2021. Some of these are incredible... pic.twitter.com/R4t5GqrMbQ

— Accountable Tech (@accountabletech) December 28, 2021

-

Individual humans are the ones who choose to believe or not believe a thing. They are the ones who choose to share or not share a thing.

-Meta's incoming CTO Andrew Bosworth in a December 2021 interview

This is certainly true; but it is not complete and does not reflect Meta/Facebook's actual beliefs. Facebook/Meta makes its money by selling ads--that is, Facebook owes its existence to the belief that people can be influenced into taking actions or adopting beliefs that they would not on their own.

Facebook's famous psychological experiment showed that Facebook can change user behavior (in this case, cause users to post different kinds of content), and studies in collaboration with Facebook have shown that Facebook was successful in increasing voter turnout.

With their right hand Facebook/Meta seeks to modify user behavior and sell services to advertising customers based on Meta's ability to influence users; but with their left hand Meta seeks to toss all responsibility back on to users.

-

We make body image issues worse for one in three girls.

-Internal Facebook research on Instagram's harm to teens from 2019

This was widely reported as part of the Frances Haugen revelations.

-

We are not actually doing what we say we are doing publicly.

-Internal Facebook review on their XCheck whitelisting practices from 2019

I wrote more on this previously here.

-

On average we make more money when people spend more time on our platform because we're an advertising business.

-Head of Instagram Adam Mosseri in a December 2021 Congressional hearing

Facebook/Meta does not like to admit this; this may actually be the first time it has been publicly stated by Meta leadership. This admission clashes with Meta talking points (for example Nick Clegg's assertion that "[Our] goal is to make sure you see what you find most meaningful — not to keep you glued to your smartphone for hours on end.").

As a business, Meta's goal is to bring in the largest possible profits. If Meta generally makes more money when users spend more time on Meta-owned platforms, then the business will optimize to cause users to spend more time on their platforms.

-

Our ability to detect (vaccine hesitancy) in comments is bad in english--and basically nonexistent everywhere else.

-Internal Facebook memo from March 2021

Facebook publicly applauds itself for doing a good job of detecting harmful content (a category in which it includes vaccine hesitancy), but privately admits that its abilities are poor.

-

We know that more people die than would otherwise because of car accidents, but by and large, cars create way more value in the world than they destroy. And I think social media is similar.

-Head of Instagram Adam Mosseri in a September 2021 interview

This sentiment echoes Bosworth's "The Ugly" memo where he states that "We connect people. Period. That’s why all the work we do in growth is justified. All the questionable contact importing practices. All the subtle language that helps people stay searchable by friends. All of the work we do to bring more communication in. The work we will likely have to do in China some day. All of it."

Communication is good, but Meta's social media platforms are optimized to increase Meta's profits by increasing engagement and overall time spent on their platforms. It is a false dichotomy to say that the only two options society has are either no global communication tools, or Meta's rapacious platforms.

-

I think these events were largely organized on platforms that don't have our abilities to stop hate, don't have our standards, and don't have our transparency.

-Facebook COO Sheryl Sandberg in a January 2021 interview

That was an assumption, and reporting from ProPublica, the Washington Post, and other outlets has since shown it to be incorrect.

-

History will not judge us kindly.

-Facebook employee on an internal message board during the January 6, 2021 insurrection

-

Two year for a coup, not bad.

-Facebook employee after Facebook's decision to suspend Donald Trump's accounts for only two years

Neither this nor quote 8 is that significant. Meta employees being aware of wrongdoing and offering milquetoast statements of concern internally is not impactful.

-

Why do we care about tweens? They are a valuable but untapped audience.

-Internal Facebook memo from 2020

Tweens are children in their pre-teenage years, generally 9-12 years old. So I'm going to restate this quote with that information included to provide better context: 'Why do we care about 9-12 year old children? They are a valuable but untapped audience.'

Not only are children protected by federal laws like COPPA, but people are not merely reservoirs of untapped value for businesses.

Interest piqued? Disagree? Reach out to me at TwelveTablesBlog [at] protonmail.com with your thoughts.

Photo by Tamara Gak on Unsplash